Machine Learning is currently one of the hottest topics in the industry, and companies have been racing to incorporate it into their products, especially apps. It is an especially relevant topic for anyone considering Machine Learning in Python.

And small wonder, because this particular branch of computer science allows us to achieve things we couldn’t even dream about before.

But what does it actually do? For example, Airbnb uses it to categorize room types based on images to enhance its user experience. Carousel harnesses image recognition to simplify the offer posting experience for the sellers, while buyers can find better listings thanks to an ML-powered recommendation system. Swisscom used machine learning for data analysis to predict the intent of their customers with text classification. The list goes on and on and the number of companies out there using this particular branch of computer science to make their products better will continue to grow.

Chances are you too are thinking about incorporating Artificial Intelligence, in the form of Machine Learning, into your product. Before you start, however, you will have to do your due diligence to choose the proper tools and technologies for the job. Which ones are best suited for the task before you?

Let’s answer this question by first defining what the individual parts of the Machine Learning model development are.

What is Machine Learning?

To be honest, from a theoretical standpoint, Machine Learning is nothing more than a combination of calculus, statistics, probability, and a lot of matrix multiplication, with a lot of thinking added into the mix. The primary goal of the whole process is to create a model that performs specific tasks without using explicit instructions.

During the development process, the developer has to choose a proper model architecture and cost function (which will be minimized by using partial derivatives) for a given problem. This will allow us to make the model perform the tasks we want. Before the model can do so, however, the data it is fed has to be preprocessed, once again using techniques best suited to the problem the model was selected to deal with. These can involve image scaling, data denoising, splitting text into tokens, removing corrupted data, and many more.
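To make the idea of minimizing a cost function with partial derivatives more concrete, here is a minimal sketch in plain Python. It fits a line y = w*x + b to a handful of made-up points using gradient descent on the mean squared error. The data, the learning rate, and all function names are assumptions chosen for illustration; real projects would normally rely on a framework for this.

```python
# Toy data generated from y = 2x + 1 (made up for illustration).
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]


def mse(w, b):
    """Cost function: mean squared error of the line y = w*x + b."""
    return sum((w * x + b - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def gradients(w, b):
    """Partial derivatives of the cost with respect to w and b."""
    n = len(xs)
    dw = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
    db = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
    return dw, db


def fit(steps=5000, learning_rate=0.01):
    """Plain gradient descent starting from w = b = 0."""
    w, b = 0.0, 0.0
    for _ in range(steps):
        dw, db = gradients(w, b)
        # Step against the gradient to reduce the cost.
        w -= learning_rate * dw
        b -= learning_rate * db
    return w, b


w, b = fit()
print(f"w={w:.3f}, b={b:.3f}, cost={mse(w, b):.6f}")
```

With this toy data the fitted parameters should end up close to the slope and intercept used to generate the points.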

We should also mention here that further changes can be made to the data in the course of training—this time, for the purpose of making the dataset bigger, a process called data augmentation.
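As a rough illustration of data augmentation, the sketch below doubles a tiny image dataset by adding a mirrored copy of every image. Images are represented as plain lists of pixel rows, and the helper names `flip_horizontal` and `augment` are hypothetical; libraries such as opencv or PIL offer far richer transformations.

```python
def flip_horizontal(image):
    """Mirror an image left to right; the image is a list of pixel rows."""
    return [list(reversed(row)) for row in image]


def augment(dataset):
    """Return the original (image, label) pairs plus a mirrored copy of each."""
    augmented = list(dataset)
    for image, label in dataset:
        augmented.append((flip_horizontal(image), label))
    return augmented


# A 2x3 grayscale "image" with a made-up label.
tiny_image = [
    [0, 50, 255],
    [10, 60, 200],
]
dataset = [(tiny_image, "cat")]
bigger_dataset = augment(dataset)
print(len(dataset), "->", len(bigger_dataset))
```

The label is carried over unchanged, which is only valid for transformations that do not alter what the image shows.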

The stages listed above also imply a handful of things that can be helpful to us in the development process. The issues we need to take into consideration include:

code readability—math can be complicated, so it’s better not to make it even more difficult to understand with language syntax,

execution speed—it’s important for all these calculations not to take too long,

rapid development—rather essential because we want our product built as quickly as possible.

Let’s dive a little deeper and choose the relevant tools!

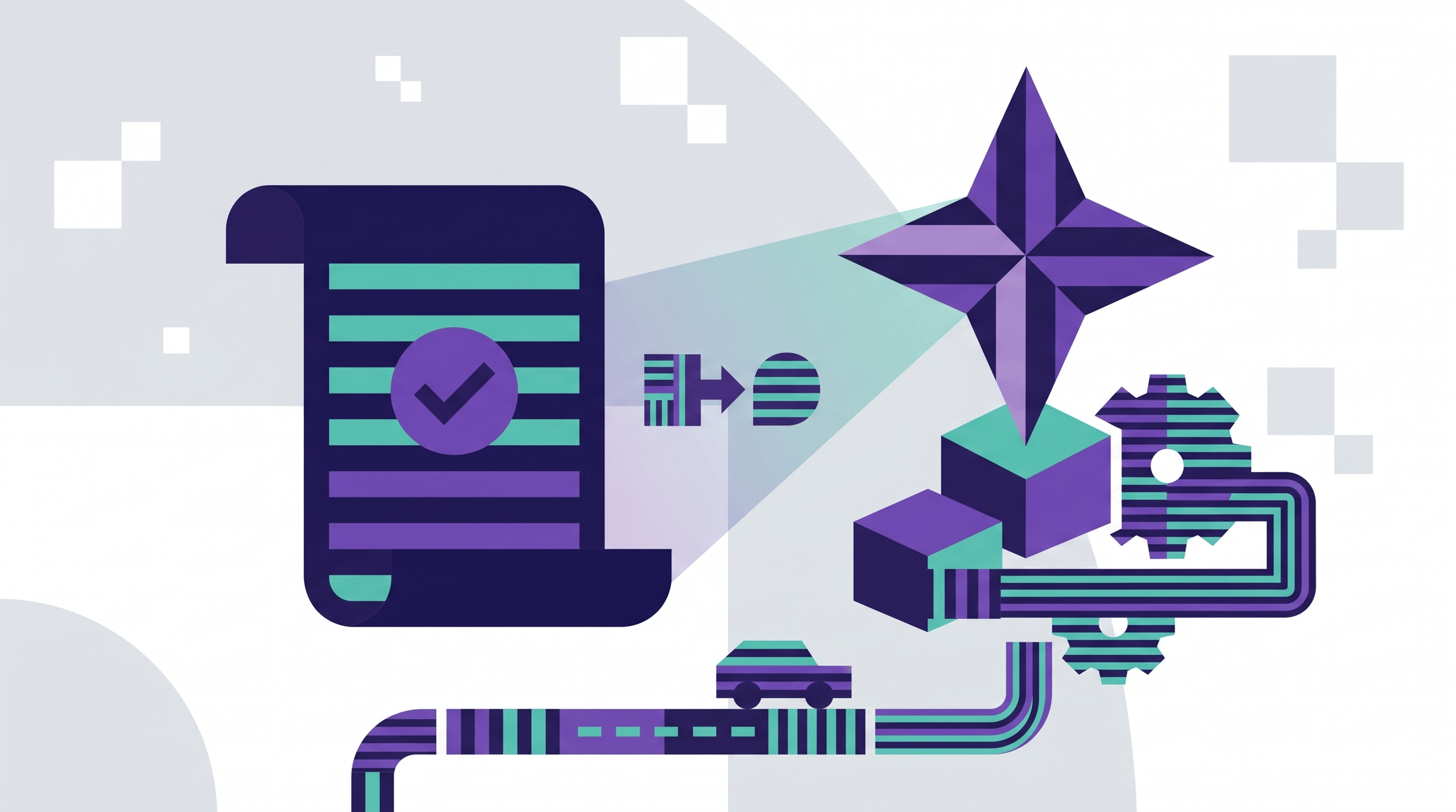

A simplified process of creating a model with supervised learning by predicting whether it’s Tom or Jerry. Source: https://www.edureka.co/blog/introduction-to-machine-learning/

Code readability

Because machine learning involves a veritable tangle of math—sometimes quite difficult and unobvious—the readability of our code (not to mention external libraries) is absolutely essential if we want to succeed. We’d like developers to think not about how to write, but what to write instead.

One great example here is the fact that machine learning models often fail silently: the code can be correct in terms of syntax while the underlying math is still wrong and the model does not work properly (you can read more about this in a great article by Andrej Karpathy, which also features a number of tips on how to fight it).
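One generic way to catch this kind of silent failure is to compare a hand-written gradient with a numerical estimate. The sketch below is a toy sanity check with hypothetical function names; it is not taken from the article mentioned above. Both gradient functions run without errors, but only one of them is mathematically right.

```python
def loss(w):
    """Toy cost function: (w - 3)^2, minimized at w = 3."""
    return (w - 3.0) ** 2


def correct_gradient(w):
    """The true derivative of the loss."""
    return 2.0 * (w - 3.0)


def buggy_gradient(w):
    """Syntactically valid, mathematically wrong: the sign is flipped."""
    return -2.0 * (w - 3.0)


def numerical_gradient(f, w, eps=1e-6):
    """Central finite-difference estimate of df/dw."""
    return (f(w + eps) - f(w - eps)) / (2.0 * eps)


def gradient_matches(analytic, f, w, tolerance=1e-4):
    """Return True when the analytic gradient agrees with the estimate."""
    return abs(analytic(w) - numerical_gradient(f, w)) < tolerance


print(gradient_matches(correct_gradient, loss, w=1.0))
print(gradient_matches(buggy_gradient, loss, w=1.0))
```

Readable code makes such mistakes easier to spot by eye, and a check like this can flag the ones that slip through.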

On top of that, dealing with typos (in statically typed programming languages) can sometimes be painful and time-consuming.

Because of the reasons outlined above, I’d suggest using dynamically typed technologies, like Python, JS, or Ruby here. Out of the three, Python is best known for its simple syntax and the emphasis it puts on code readability, as declared in The Zen of Python:

The Zen of Python. Source: Python.org

Python’s advantages in terms of code readability

Python programmers (we call ourselves Pythonistas) are keen on creating code that’s easy to read. Heck, you’re probably even familiar with the fact that this particular language of ours is very strict about proper indentations :)

Another of Python’s advantages is its multiparadigm nature, which again enables developers to be more flexible and approach problems in the simplest way possible.
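As a small illustration of that flexibility, the sketch below computes the same average in three styles: procedural, functional, and object-oriented. The `RunningMean` class and the sample values are made up for this example.

```python
from functools import reduce

values = [1.5, 2.5, 4.0]

# Procedural style: an explicit loop with a running total.
total = 0.0
for value in values:
    total += value
mean_procedural = total / len(values)

# Functional style: fold the list with reduce.
mean_functional = reduce(lambda acc, value: acc + value, values, 0.0) / len(values)


# Object-oriented style: state and behavior bundled in a class.
class RunningMean:
    def __init__(self):
        self.total = 0.0
        self.count = 0

    def add(self, value):
        self.total += value
        self.count += 1

    def result(self):
        return self.total / self.count


tracker = RunningMean()
for value in values:
    tracker.add(value)
mean_object_oriented = tracker.result()

print(mean_procedural, mean_functional, mean_object_oriented)
```

Which style reads best depends on the problem; the point is that the language does not force one of them on you.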

Discussing readability, however, we cannot ignore what constitutes the right environment for machine learning development. Here, Python truly shines among its fellow programming languages. Furthermore, Jupyter Notebook and Google Colab offer Pythonistas the great option of running their code in cells. From my experience, a pipeline split this nicely makes the code even more readable.

Additionally, both these tools allow us to create great visualizations for data analysis inside the notebook. Charts, graphs, images, etc. help developers decide what to do with their data and display their results in an explicit manner, under the snippet responsible for rendering them.

Last but not least, one great thing about both Jupyter Notebooks and Google Colab is that if something doesn’t work, you don’t have to run the whole script again; you just repair the faulty block and go on. In my opinion, this is extremely useful when testing data cleaning functions or applying some transformations, since it’s enough to read the data only once.

(I believe it is also possible to run languages other than Python in Jupyter. However, apart from the R and Julia kernels, the others are maintained by the community, so from my point of view there is a chance that some of them are outdated.)

Example of Jupyter notebook use—data visualization, executing snippets individually, adding formulas, and a great visual effect. Source: https://www.dataquest.io/blog/jupyter-notebook-tips-tricks-shortcuts/

Speed of execution

Let’s continue deeper into the math jungle. Why? Because apart from being readable, our formulas also ought to be executable within a reasonable amount of time. Machine Learning, especially Deep Learning, a subset of machine learning using Deep Neural Nets, is widely known for its lengthy model training sessions, which can take anywhere from a couple of hours to a couple of days.

Training models takes a lot of time, that is why execution speed is crucial in this branch of computer science. Source: https://pl.pinterest.com/pin/805229608345568643/

You can try to cut down their length in a variety of ways. One basic technique involves using pre-trained models for transfer learning. Anyway, some tools can also help us, and we’ll be talking about them in the next section.

Python’s advantages in terms of execution speed

Common wisdom suggests that in this field Python could never hope to match C or C++. But is that indeed so? Are the two unsurpassable winners? Well, not necessarily!

They will undoubtedly win out over Python if we don’t call any external libraries. But what happens if we do? In such a case, Python basically becomes a wrapper for C/C++ code! Modules like numpy (for numerical operations, like matrix multiplication), opencv and PIL (both for image preprocessing and augmentation), Tensorflow and PyTorch (the most popular frameworks for DL), and others have their performance-critical parts written in those languages. Even other widely used modules, like pandas (great for data preprocessing), Keras (which simplifies using Tensorflow), or scipy (for scientific computing), are built on top of the libraries mentioned before.

This clearly shows us that the speed of execution in Python can be similar to other languages, and the real difference generally lies in the quantity and quality of the Machine Learning modules used.
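The effect can be observed even without third-party libraries. In CPython, the built-in `sum` runs its loop in C, while a hand-written `for` loop is executed by the interpreter. The sketch below compares the two with the standard `timeit` module; the list size and repeat count are arbitrary assumptions, and the exact timings will vary from machine to machine.

```python
import timeit

numbers = list(range(200_000))


def python_loop_sum(values):
    """Add the values one by one in interpreted Python code."""
    total = 0
    for value in values:
        total += value
    return total


# Both approaches give the same answer.
assert python_loop_sum(numbers) == sum(numbers)

# Time each approach over a few repetitions.
loop_seconds = timeit.timeit(lambda: python_loop_sum(numbers), number=5)
builtin_seconds = timeit.timeit(lambda: sum(numbers), number=5)
print(f"Python loop: {loop_seconds:.3f}s, built-in sum: {builtin_seconds:.3f}s")
```

Libraries such as numpy apply the same principle on a much larger scale by moving whole array operations into compiled code.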

(If you’re interested, you can look into comparisons of DL frameworks on your own, since that topic lies somewhat outside the scope of this article. As you will see, however, the difference between Tensorflow and PyTorch is not all that huge.)

Let’s move on and discuss this issue in the next section.

Rapid development

In general, overall development time is much more important than execution speed. This statement may not be exactly true in the case of Machine Learning, but as we learned in the previous section, libraries can do wonders for us in terms of speeding up our computations. Development speed matters because if a team does not develop your app rapidly while maintaining good quality, you can lose out on a lot of potential business opportunities.

Here, let’s take into consideration the three most popular technologies which we can use for Machine Learning development in 2019, according to Stack Overflow: JavaScript, Python, and Java.

The most popular technologies, Stack Overflow, 2022. Source: Stack Overflow

The amount of code we need to solve a problem can be crucial for the swiftness of the development process. Here, Python and JavaScript vastly outstrip the majority of their fellow languages, including Java. It’s clear even when looking at very basic scripts, like “Hello World.”

“Hello World” in Java

In Python
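In Python, the conventional version of this program is a single statement, with no class or entry-point declaration required:

```python
print("Hello World")
```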

In JS

Most of the time, however, we’ll be dealing with problems much more complex than “Hello World” :) That’s when external modules will become helpful. Not only do they speed up execution, as we’ve already described in the previous section, they also make our code shorter. When using these modules, a lot of work happens behind the scenes.
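To give a feel for how much a ready-made module can shorten code, the sketch below computes a population standard deviation twice: once by hand and once with a single library call. Python's standard `statistics` module is used here as a stand-in for an external package, and the sample values are made up.

```python
import math
import statistics

samples = [2.0, 4.0, 4.0, 4.0, 5.0, 5.0, 7.0, 9.0]


def population_std_by_hand(values):
    """Population standard deviation written out step by step."""
    mean = sum(values) / len(values)
    squared_deviations = [(value - mean) ** 2 for value in values]
    variance = sum(squared_deviations) / len(values)
    return math.sqrt(variance)


# The same quantity from a ready-made module: one call.
std_from_module = statistics.pstdev(samples)

print(population_std_by_hand(samples), std_from_module)
```

The gap grows quickly with more involved operations, such as training a neural network, where a framework hides hundreds of lines behind a few calls.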

In terms of the number of packages available, although all three languages offer a very broad selection, JS remains the true number one. npm hosts the largest registry of open-source packages among the three, followed by PyPI for Python and Maven Central for Java.

Python’s advantages in terms of development swiftness

Lest we forget, in the Machine Learning world everything moves fast. New ideas and observations bubble up every day, and modules are usually developed in Python first. That’s because a large portion of scientists and researchers simply prefer Python over JS or Java.

That’s also quite the advantage for Pythonistas: they can make use of the newest developments in Artificial Intelligence, so their models can make predictions more accurately, learn faster, etc. This means that the prospective user can get their app in a much shorter time frame, while the app itself can offer a much better user experience.

Communities

Last but not least, there are the communities. And their support can be priceless sometimes. All three languages can proudly say that the developers making up their respective communities are absolutely special and there are no clear winners here.

In this instance, however, we should also take into account the communities behind particular Machine Learning frameworks. In my experience, asking about solutions for Python’s Tensorflow produces the fastest responses, partly thanks to the fact that it’s a Google product, but also because it’s the most popular tool for Deep Learning :) Programmers are provided with great Stack Overflow support and plenty of videos on YouTube, and there are tons of tutorials for newcomers provided by the Tensorflow team itself.

Survey polling data scientists’ preferred libraries. Only Python libraries are shown. Source: https://www.jetbrains.com/research/data-science-2018/

Is Machine Learning only for Python?

Clearly, Python is not the only language you can use for your Machine Learning project. JS and Swift, the latter not even mentioned here (despite its growing popularity, driven, to some extent, by the recent release of Tensorflow for Swift), are also great for creating AI-powered algorithms. The serpentine language, however, clearly outshines its competition. Why?

Compared to other developers, Pythonistas have better access to the latest and hottest discoveries and ideas in Machine Learning, thanks to the broad usage of the language in academia.

There are plenty of resources that make it easy to develop in Machine Learning using Python, probably more than for any other language—Tensorflow being just one example.

When using proper tools (such as PyTorch, Tensorflow, or pandas), Python developers have no trouble achieving great execution speed by making their beloved programming language a wrapper for C++ code.

If computing speed is not the issue, code readability can be the thing that clinches the case in favor of going with Python for Machine Learning. Its advantages in this area are directly reflected in development swiftness, ease of bug fixing, and the ability to focus on experimenting with various models. That’s why you get the product earlier and spend less money on its development, while still maintaining top-shelf quality.

So, what are you waiting for? Knowing which technology comes recommended, you’re ready to start your project! Good luck!

P.S. Remember, though: sometimes it may turn out that you didn’t need Artificial Intelligence for your project at all. That’s nothing to be worried about, as you can still make use of Python for a variety of different tasks!
